Warcraft

Information Technology (IT) research differs considerably from research in the social sciences. IT research generally has no clear-cut methodology, no questionnaire design, no data processing, and only occasionally an analysis of results. As far as I have observed, research in IT can cover several types, including:

- Pure IT Research: This type of research tries to solve problems that arise in the IT field by seeking fundamental solutions. It generally involves studying existing theories in order to develop other related fundamental theories. Research that may fall within this scope includes the development of:

- Information system development methodologies

- Data warehouse construction methodologies

- Data mining/soft-computing methods

- Network concepts

- Search methods

- Optimization theory

- Variable selection methods

- Network security systems

- Encryption and decryption methods

- Programming languages

- Data storage methods

- Image processing methods

- Pattern recognition methods

- Among others

- Applied IT Research: Applied research in IT refers to research that takes theories or methods already developed by others within pure IT research and uses them to develop further research. Research that may fall within this scope includes the development of:

- Soft-computing-based control systems

- Hardware that applies new data storage methods

- Soft-computing-based medical analysis methods

- Research comparing theories/methods

- Open-source-based operating systems

- Database systems with new data indexing schemes

- Methods for improving network effectiveness based on data mining

- Search systems with new search methods

- Word processing with new spell-checking methods

- Database systems with new data storage methods

- Image processing applications with new processing methods

- Data modeling applications that accommodate new methods

- Programs (DLL or JSP) for particular methods

- Bioinformatics and biomedicine

- Application of IT methods in other fields (economics, social sciences, etc.)

- Among others

- System Development Research: "System" here refers to systems that users can use directly, such as information systems and network systems. Research of this type generally tries to apply the various theories or methods developed within pure or applied research, such as database systems, programming languages, network concepts, and so on. It usually covers the development of systems for a particular individual or community, such as:

- Financial information systems

- Expert systems

- Decision support systems

- Data warehouse systems

- Digital library systems

- Mobile dictionary systems

- Open-source-based network systems

- Among others

Compared with pure and applied IT research, this type of research currently still seems to be the more popular choice among Indonesian IT students completing their studies. Its procedures are also well established, because system development methodologies have generally already been proposed at the pure-research stage.

- Research on the Use and Management of IT: Recently, as the application of IT in society has grown, the study of effective IT use and of IT management has grown as well, and much research is being carried out in these areas. Although still within the scope of IT, this type of research is probably more closely tied to social research, because its objects are usually IT users, IT administrators, or IT providers. There is therefore ample room to apply research methodologies of the kind used in the social sciences.

Some may still debate whether system development counts as research at all. Consider the definition of the word research itself:

Research is a human activity based on intellectual investigation and is aimed at discovering, interpreting, and revising human knowledge on different aspects of the world. Research can use the scientific method, but need not do so. (source: http://en.wikipedia.org/wiki/Research)

By this definition, research essentially aims to discover, interpret, or revise the knowledge held by society. Research that consists of system development, because it does not involve discovering, interpreting, or revising that knowledge, therefore remains open to debate as to whether it can be counted as IT research.

Posted by Lord Adi_smpn1 at 22:50

21 November 2008

ICT

ICT is advancing rapidly, especially in computer networking. Around 1988, computer networks began to be used in universities and companies. With these networks, work was expected to be completed quickly. A computer network can connect one computer to another. One example of a computer network is the Internet.

The Internet can be used for many things, such as searching for source material for a manuscript or accessing other features. The Internet is a giant network that has become a reality in meeting the information and communication needs of millions of people around the world.

Increasingly advanced network technology needs to be supported by network hardware and software. In their early development, computer networks still used coaxial cable. Today, computer networks use fiber-optic cable or wireless communication.

The concept of the computer network first emerged in the 1940s in the United States, from the Model I computer development project at Bell Laboratories and from the Harvard University research group led by Professor Howard Aiken. In the 1950s, large computers called supercomputers appeared.

These computers could serve several terminals. At that time the networking concept was known as TSS, or Time Sharing System, in which several terminals were connected in series to a host computer.

Session 5

Information and Communication Technology

The information and communication technology (ICT) industry has become a new flagship for Norway. The ICT industry is currently Norway's second-largest land-based industry by turnover; it not only creates wealth but is also a vital supplier to other business and public sectors. The industry consists of a wide range of high-technology companies that create new kinds of telecommunications, ICT hardware and software, and industrial electronics, and that provide consulting services.

Norway is one of the world's largest per-capita users of ICT, with an infrastructure that includes well-developed systems and fiber-optic cable networks for digital transmission. The capacity of Norway's communication networks has grown rapidly, and the telecommunications sector has produced researchers and companies able to compete internationally. The range of products available includes satellite communication systems, global positioning systems, cellular telephone systems, network management systems, transmission systems, and fiber-optic technology.

Norwegian hardware designers are an innovative group and have developed a variety of specialized products, such as video conferencing systems, multimedia equipment, digital radio transmitters, data storage solutions, credit card terminals, and power supplies.

Norway's software revolution was triggered by the development of traditional industries such as oil, shipping, and fisheries. The needs of these sectors, together with the ability to create and finance high-technology solutions while keeping costs down, have driven the development of new, integrated software. Today many companies in the ICT industry supply software and modular solutions (including data, customer relations, administrative, and financial management systems) to almost the entire private and public sectors. Norwegian companies have also been pioneers in telemedicine and distance learning. These advanced solutions are beginning to attract international buyers.

The Internet is widely used in Norway and continues to grow rapidly. Norwegian companies are at the forefront of Internet technology, including the development of multifunction websites and intranets, very fast web browsers, online games, and e-commerce solutions. The Norwegian ICT industry excels at finding easy-to-use solutions that prioritize the user and interaction between individuals.

SMPN 1 Denpasar is the oldest school in Bali, located at Jl. Surapati no. 2, Denpasar. It is the most popular school and was the first to become a pilot international-standard school. It is headed by an Ajeg Bali teacher, Mr. Rimbya Temaja, with Mr. Rai Teja as his deputy. SMPN 1 Denpasar has three types of classes:

- Acceleration: only 2 years, but students who want to enter the acceleration class must first pass a selection process

- SBI (Sistem Bertaraf Internasional, International-Standard System): 3 years of schooling, with active use of English

- Bilingual/Regular: 3 years of schooling. Regular differs little from SBI, but English is still used passively

The monthly tuition fee (SPP) naturally differs by class type: Rp 300.000,00 for Acceleration, Rp 250.000,00 for SBI, and Rp 200.000,00 for Bilingual. Under the international-standard system, lessons at SMPN 1 Denpasar use laptops, and assignments are given by email.

Besides all that, SMPN 1 Denpasar also has a drawback: because it is very close to the city center, a lot of traffic passes by, which greatly disrupts teaching and learning at the school.

A computer is a machine that manipulates data according to a list of instructions.

The first devices that resemble modern computers date to the mid-20th century (1940–1945), although the computer concept and various machines similar to computers existed earlier. Early electronic computers were the size of a large room, consuming as much power as several hundred modern personal computers (PC).[1] Modern computers are based on tiny integrated circuits and are millions to billions of times more capable while occupying a fraction of the space.[2] Today, simple computers may be made small enough to fit into a wristwatch and be powered from a watch battery. Personal computers, in various forms, are icons of the Information Age and are what most people think of as "a computer"; however, the most common form of computer in use today is the embedded computer. Embedded computers are small, simple devices that are used to control other devices — for example, they may be found in machines ranging from fighter aircraft to industrial robots, digital cameras, and children's toys.

The ability to store and execute lists of instructions called programs makes computers extremely versatile and distinguishes them from calculators. The Church–Turing thesis is a mathematical statement of this versatility: any computer with a certain minimum capability is, in principle, capable of performing the same tasks that any other computer can perform. Therefore, computers with capability and complexity ranging from that of a personal digital assistant to a supercomputer are all able to perform the same computational tasks given enough time and storage capacity.


History of computing

- Main article: History of computer hardware

It is difficult to identify any one device as the earliest computer, partly because the term "computer" has been subject to varying interpretations over time. Originally, the term "computer" referred to a person who performed numerical calculations (a human computer), often with the aid of a mechanical calculating device.

The history of the modern computer begins with two separate technologies - that of automated calculation and that of programmability.

Examples of early mechanical calculating devices included the abacus, the slide rule and arguably the astrolabe and the Antikythera mechanism (which dates from about 150-100 BC). Hero of Alexandria (c. 10–70 AD) built a mechanical theater which performed a play lasting 10 minutes and was operated by a complex system of ropes and drums that might be considered to be a means of deciding which parts of the mechanism performed which actions and when.[3] This is the essence of programmability.

The "castle clock", an astronomical clock invented by Al-Jazari in 1206, is considered to be the earliest programmable analog computer.[4] It displayed the zodiac, the solar and lunar orbits, a crescent moon-shaped pointer travelling across a gateway causing automatic doors to open every hour,[5][6] and five robotic musicians who play music when struck by levers operated by a camshaft attached to a water wheel. The length of day and night could be re-programmed every day in order to account for the changing lengths of day and night throughout the year.[4]

The end of the Middle Ages saw a re-invigoration of European mathematics and engineering, and Wilhelm Schickard's 1623 device was the first of a number of mechanical calculators constructed by European engineers. However, none of those devices fit the modern definition of a computer because they could not be programmed.

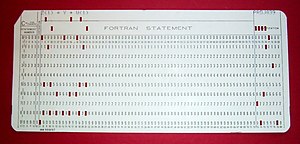

In 1801, Joseph Marie Jacquard made an improvement to the textile loom that used a series of punched paper cards as a template to allow his loom to weave intricate patterns automatically. The resulting Jacquard loom was an important step in the development of computers because the use of punched cards to define woven patterns can be viewed as an early, albeit limited, form of programmability.

It was the fusion of automatic calculation with programmability that produced the first recognizable computers. In 1837, Charles Babbage was the first to conceptualize and design a fully programmable mechanical computer that he called "The Analytical Engine".[7] Due to limited finances, and an inability to resist tinkering with the design, Babbage never actually built his Analytical Engine.

Large-scale automated data processing of punched cards was performed for the U.S. Census in 1890 by tabulating machines designed by Herman Hollerith and manufactured by the Computing Tabulating Recording Corporation, which later became IBM. By the end of the 19th century a number of technologies that would later prove useful in the realization of practical computers had begun to appear: the punched card, Boolean algebra, the vacuum tube (thermionic valve) and the teleprinter.

During the first half of the 20th century, many scientific computing needs were met by increasingly sophisticated analog computers, which used a direct mechanical or electrical model of the problem as a basis for computation. However, these were not programmable and generally lacked the versatility and accuracy of modern digital computers.

| Name | First operational | Numeral system | Computing mechanism | Programming | Turing complete |
|---|---|---|---|---|---|
| Zuse Z3 (Germany) | May 1941 | Binary | Electro-mechanical | Program-controlled by punched film stock | Yes (1998) |
| Atanasoff–Berry Computer (US) | mid-1941 | Binary | Electronic | Not programmable—single purpose | No |
| Colossus (UK) | January 1944 | Binary | Electronic | Program-controlled by patch cables and switches | No |
| Harvard Mark I – IBM ASCC (US) | 1944 | Decimal | Electro-mechanical | Program-controlled by 24-channel punched paper tape (but no conditional branch) | No |
| ENIAC (US) | November 1945 | Decimal | Electronic | Program-controlled by patch cables and switches | Yes |
| Manchester Small-Scale Experimental Machine (UK) | June 1948 | Binary | Electronic | Stored-program in Williams cathode ray tube memory | Yes |
| Modified ENIAC (US) | September 1948 | Decimal | Electronic | Program-controlled by patch cables and switches plus a primitive read-only stored programming mechanism using the Function Tables as program ROM | Yes |
| EDSAC (UK) | May 1949 | Binary | Electronic | Stored-program in mercury delay line memory | Yes |
| Manchester Mark I (UK) | October 1949 | Binary | Electronic | Stored-program in Williams cathode ray tube memory and magnetic drum memory | Yes |
| CSIRAC (Australia) | November 1949 | Binary | Electronic | Stored-program in mercury delay line memory | Yes |

A succession of steadily more powerful and flexible computing devices were constructed in the 1930s and 1940s, gradually adding the key features that are seen in modern computers. The use of digital electronics (largely invented by Claude Shannon in 1937) and more flexible programmability were vitally important steps, but defining one point along this road as "the first digital electronic computer" is difficult (Shannon 1940). Notable achievements include:

- Konrad Zuse's electromechanical "Z machines". The Z3 (1941) was the first working machine featuring binary arithmetic, including floating point arithmetic and a measure of programmability. In 1998 the Z3 was proved to be Turing complete, therefore being the world's first operational computer.

- The non-programmable Atanasoff–Berry Computer (1941) which used vacuum tube based computation, binary numbers, and regenerative capacitor memory.

- The secret British Colossus computers (1943)[8], which had limited programmability but demonstrated that a device using thousands of tubes could be reasonably reliable and electronically reprogrammable. It was used for breaking German wartime codes.

- The Harvard Mark I (1944), a large-scale electromechanical computer with limited programmability.

- The U.S. Army's Ballistics Research Laboratory ENIAC (1946), which used decimal arithmetic and is sometimes called the first general purpose electronic computer (since Konrad Zuse's Z3 of 1941 used electromagnets instead of electronics). Initially, however, ENIAC had an inflexible architecture which essentially required rewiring to change its programming.

Several developers of ENIAC, recognizing its flaws, came up with a far more flexible and elegant design, which came to be known as the "stored program architecture" or von Neumann architecture. This design was first formally described by John von Neumann in the paper First Draft of a Report on the EDVAC, distributed in 1945. A number of projects to develop computers based on the stored-program architecture commenced around this time, the first of these being completed in Great Britain. The first to be demonstrated working was the Manchester Small-Scale Experimental Machine (SSEM or "Baby"), while the EDSAC, completed a year after SSEM, was the first practical implementation of the stored program design. Shortly thereafter, the machine originally described by von Neumann's paper—EDVAC—was completed but did not see full-time use for an additional two years.

Nearly all modern computers implement some form of the stored-program architecture, making it the single trait by which the word "computer" is now defined. While the technologies used in computers have changed dramatically since the first electronic, general-purpose computers of the 1940s, most still use the von Neumann architecture.

Computers that used vacuum tubes as their electronic elements were in use throughout the 1950s. Vacuum tube electronics were largely replaced in the 1960s by transistor-based electronics, which are smaller, faster, cheaper to produce, require less power, and are more reliable. In the 1970s, integrated circuit technology and the subsequent creation of microprocessors, such as the Intel 4004, further decreased size and cost and further increased speed and reliability of computers. By the 1980s, computers became sufficiently small and cheap to replace simple mechanical controls in domestic appliances such as washing machines. The 1980s also witnessed home computers and the now ubiquitous personal computer. With the evolution of the Internet, personal computers are becoming as common as the television and the telephone in the household.

Stored program architecture

- Main articles: Computer program and Computer programming

The defining feature of modern computers which distinguishes them from all other machines is that they can be programmed. That is to say that a list of instructions (the program) can be given to the computer and it will store them and carry them out at some time in the future.

In most cases, computer instructions are simple: add one number to another, move some data from one location to another, send a message to some external device, etc. These instructions are read from the computer's memory and are generally carried out (executed) in the order they were given. However, there are usually specialized instructions to tell the computer to jump ahead or backwards to some other place in the program and to carry on executing from there. These are called "jump" instructions (or branches). Furthermore, jump instructions may be made to happen conditionally so that different sequences of instructions may be used depending on the result of some previous calculation or some external event. Many computers directly support subroutines by providing a type of jump that "remembers" the location it jumped from and another instruction to return to the instruction following that jump instruction.

Program execution might be likened to reading a book. While a person will normally read each word and line in sequence, they may at times jump back to an earlier place in the text or skip sections that are not of interest. Similarly, a computer may sometimes go back and repeat the instructions in some section of the program over and over again until some internal condition is met. This is called the flow of control within the program and it is what allows the computer to perform tasks repeatedly without human intervention.

Comparatively, a person using a pocket calculator can perform a basic arithmetic operation such as adding two numbers with just a few button presses. But to add together all of the numbers from 1 to 1,000 would take thousands of button presses and a lot of time—with a near certainty of making a mistake. On the other hand, a computer may be programmed to do this with just a few simple instructions. For example:

```
        mov  #0,sum     ; set sum to 0
        mov  #1,num     ; set num to 1
loop:   add  num,sum    ; add num to sum
        add  #1,num     ; add 1 to num
        cmp  num,#1000  ; compare num to 1000
        ble  loop       ; if num <= 1000, go back to 'loop'
        halt            ; end of program. stop running
```

Once told to run this program, the computer will perform the repetitive addition task without further human intervention. It will almost never make a mistake and a modern PC can complete the task in about a millionth of a second.[9]

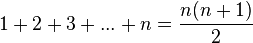

However, computers cannot "think" for themselves in the sense that they only solve problems in exactly the way they are programmed to. An intelligent human faced with the above addition task might soon realize that instead of actually adding up all the numbers one can simply use the equation

$$1 + 2 + 3 + \dots + n = \frac{n(n+1)}{2}$$

and arrive at the correct answer (500,500) with little work.[10] In other words, a computer programmed to add up the numbers one by one as in the example above would do exactly that without regard to efficiency or alternative solutions.
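The contrast between adding the numbers one by one and using a shortcut can be sketched in Python. This is only an illustration: the function names `sum_by_loop` and `sum_by_formula` are made up for the example, and the shortcut assumed here is the closed form n(n + 1)/2 for the sum 1 + 2 + ... + n.

```python
def sum_by_loop(n: int) -> int:
    """Add the integers 1..n one at a time, as the looping program does."""
    total = 0
    num = 1
    while num <= n:
        total += num
        num += 1
    return total


def sum_by_formula(n: int) -> int:
    """Use the closed form n(n + 1)/2 instead of iterating."""
    return n * (n + 1) // 2


if __name__ == "__main__":
    print(sum_by_loop(1000))     # 500500
    print(sum_by_formula(1000))  # 500500
```

Both functions return the same value, but the first performs a thousand additions while the second performs one multiplication and one division.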

Programs

In practical terms, a computer program may run from just a few instructions to many millions of instructions, as in a program for a word processor or a web browser. A typical modern computer can execute billions of instructions per second (gigahertz or GHz) and rarely makes a mistake over many years of operation. Large computer programs comprising several million instructions may take teams of programmers years to write, so it is highly unlikely that the entire program has been written without error.

Errors in computer programs are called "bugs". Bugs may be benign and not affect the usefulness of the program, or have only subtle effects. But in some cases they may cause the program to "hang" (become unresponsive to input such as mouse clicks or keystrokes), or to completely fail or "crash". Otherwise benign bugs may sometimes be harnessed for malicious intent by an unscrupulous user writing an "exploit": code designed to take advantage of a bug and disrupt a program's proper execution. Bugs are usually not the fault of the computer. Since computers merely execute the instructions they are given, bugs are nearly always the result of programmer error or an oversight made in the program's design.[11]

In most computers, individual instructions are stored as machine code with each instruction being given a unique number (its operation code or opcode for short). The command to add two numbers together would have one opcode, the command to multiply them would have a different opcode and so on. The simplest computers are able to perform any of a handful of different instructions; the more complex computers have several hundred to choose from—each with a unique numerical code. Since the computer's memory is able to store numbers, it can also store the instruction codes. This leads to the important fact that entire programs (which are just lists of instructions) can be represented as lists of numbers and can themselves be manipulated inside the computer just as if they were numeric data. The fundamental concept of storing programs in the computer's memory alongside the data they operate on is the crux of the von Neumann, or stored program, architecture. In some cases, a computer might store some or all of its program in memory that is kept separate from the data it operates on. This is called the Harvard architecture after the Harvard Mark I computer. Modern von Neumann computers display some traits of the Harvard architecture in their designs, such as in CPU caches.

While it is possible to write computer programs as long lists of numbers (machine language) and this technique was used with many early computers,[12] it is extremely tedious to do so in practice, especially for complicated programs. Instead, each basic instruction can be given a short name that is indicative of its function and easy to remember—a mnemonic such as ADD, SUB, MULT or JUMP. These mnemonics are collectively known as a computer's assembly language. Converting programs written in assembly language into something the computer can actually understand (machine language) is usually done by a computer program called an assembler. Machine languages and the assembly languages that represent them (collectively termed low-level programming languages) tend to be unique to a particular type of computer. For instance, an ARM architecture computer (such as may be found in a PDA or a hand-held videogame) cannot understand the machine language of an Intel Pentium or the AMD Athlon 64 computer that might be in a PC.[13]
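The step from mnemonics to numbers can be sketched with a toy assembler. This is a minimal Python illustration for an imaginary machine: the opcode numbers in `OPCODES` and the function name `assemble` are assumptions made for the example and do not correspond to any real instruction set.

```python
# Hypothetical opcode table for an imaginary machine; real instruction sets differ.
OPCODES = {"HALT": 0, "LOAD": 1, "ADD": 2, "SUB": 3, "JUMP": 4}


def assemble(source: str) -> list[int]:
    """Translate lines such as 'ADD 7' into a flat list of numbers.

    Each instruction becomes two numbers: its opcode and its operand
    (0 when the instruction takes no operand).
    """
    machine_code = []
    for line in source.splitlines():
        line = line.split(";")[0].strip()  # drop comments and surrounding whitespace
        if not line:
            continue
        parts = line.split()
        mnemonic = parts[0].upper()
        operand = int(parts[1]) if len(parts) > 1 else 0
        machine_code.extend([OPCODES[mnemonic], operand])
    return machine_code


program = """
LOAD 5   ; put 5 in the accumulator
ADD 7    ; add 7 to it
HALT
"""
print(assemble(program))  # [1, 5, 2, 7, 0, 0]
```

The output is just a list of numbers, which is the sense in which a program can be stored and manipulated like any other numeric data.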

Though considerably easier than in machine language, writing long programs in assembly language is often difficult and error prone. Therefore, most complicated programs are written in more abstract high-level programming languages that are able to express the needs of the computer programmer more conveniently (and thereby help reduce programmer error). High level languages are usually "compiled" into machine language (or sometimes into assembly language and then into machine language) using another computer program called a compiler.[14] Since high level languages are more abstract than assembly language, it is possible to use different compilers to translate the same high level language program into the machine language of many different types of computer. This is part of the means by which software like video games may be made available for different computer architectures such as personal computers and various video game consoles.

The task of developing large software systems is an immense intellectual effort. Producing software with an acceptably high reliability on a predictable schedule and budget has proved historically to be a great challenge; the academic and professional discipline of software engineering concentrates specifically on this problem.

Example

Suppose a computer is being employed to drive a traffic light. A simple stored program might say:

1. Turn off all of the lights

2. Turn on the red light

3. Wait for sixty seconds

4. Turn off the red light

5. Turn on the green light

6. Wait for sixty seconds

7. Turn off the green light

8. Turn on the yellow light

9. Wait for two seconds

10. Turn off the yellow light

11. Jump to instruction number (2)

With this set of instructions, the computer would cycle the light continually through red, green, yellow and back to red again until told to stop running the program.

However, suppose there is a simple on/off switch connected to the computer that is intended to be used to make the light flash red while some maintenance operation is being performed. The program might then instruct the computer to:

1. Turn off all of the lights

2. Turn on the red light

3. Wait for sixty seconds

4. Turn off the red light

5. Turn on the green light

6. Wait for sixty seconds

7. Turn off the green light

8. Turn on the yellow light

9. Wait for two seconds

10. Turn off the yellow light

11. If the maintenance switch is NOT turned on then jump to instruction number 2

12. Turn on the red light

13. Wait for one second

14. Turn off the red light

15. Wait for one second

16. Jump to instruction number 11

In this manner, the computer is either running the instructions from number (2) to (11) over and over or it is running the instructions from (11) down to (16) over and over, depending on the position of the switch.[15]
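The sixteen-instruction traffic light program can be simulated in a few lines of Python. This is a sketch under stated assumptions: the tuple encoding of each instruction, the operation names, and the `run` function are invented for illustration, waits are skipped instead of pausing, and the run is capped at `max_steps` instructions so that it terminates.

```python
# One entry per numbered instruction in the program above (numbering starts at 1).
PROGRAM = [
    ("all_off",),                # 1
    ("on", "red"),               # 2
    ("wait", 60),                # 3
    ("off", "red"),              # 4
    ("on", "green"),             # 5
    ("wait", 60),                # 6
    ("off", "green"),            # 7
    ("on", "yellow"),            # 8
    ("wait", 2),                 # 9
    ("off", "yellow"),           # 10
    ("jump_if_switch_off", 2),   # 11
    ("on", "red"),               # 12
    ("wait", 1),                 # 13
    ("off", "red"),              # 14
    ("wait", 1),                 # 15
    ("jump", 11),                # 16
]


def run(switch_on: bool, max_steps: int) -> list[str]:
    """Execute up to max_steps instructions and return a log of light changes."""
    log = []
    pc = 1  # program counter: number of the next instruction to execute
    for _ in range(max_steps):
        op, *args = PROGRAM[pc - 1]
        pc += 1  # by default, continue with the following instruction
        if op == "all_off":
            log.append("all off")
        elif op == "on":
            log.append(f"{args[0]} on")
        elif op == "off":
            log.append(f"{args[0]} off")
        elif op == "wait":
            pass  # a real controller would pause for args[0] seconds here
        elif op == "jump":
            pc = args[0]
        elif op == "jump_if_switch_off":
            if not switch_on:
                pc = args[0]
    return log


if __name__ == "__main__":
    print(run(switch_on=False, max_steps=22))
    print(run(switch_on=True, max_steps=22))
```

With the switch off, the log keeps cycling through red, green, and yellow; with the switch on, it falls through instruction 11 and settles into the flashing-red loop.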

How computers work

- Main articles: Central processing unit and Microprocessor

A general purpose computer has four main sections: the arithmetic and logic unit (ALU), the control unit, the memory, and the input and output devices (collectively termed I/O). These parts are interconnected by busses, often made of groups of wires.

The control unit, ALU, registers, and basic I/O (and often other hardware closely linked with these) are collectively known as a central processing unit (CPU). Early CPUs were composed of many separate components but since the mid-1970s CPUs have typically been constructed on a single integrated circuit called a microprocessor.

Control unit

- Main articles: CPU design and Control unit

The control unit (often called a control system or central controller) directs the various components of a computer. It reads and interprets (decodes) instructions in the program one by one. The control system decodes each instruction and turns it into a series of control signals that operate the other parts of the computer.[16] Control systems in advanced computers may change the order of some instructions so as to improve performance.

A key component common to all CPUs is the program counter, a special memory cell (a register) that keeps track of which location in memory the next instruction is to be read from.[17]

The control system's function is as follows—note that this is a simplified description, and some of these steps may be performed concurrently or in a different order depending on the type of CPU:

1. Read the code for the next instruction from the cell indicated by the program counter.

2. Decode the numerical code for the instruction into a set of commands or signals for each of the other systems.

3. Increment the program counter so it points to the next instruction.

4. Read whatever data the instruction requires from cells in memory (or perhaps from an input device). The location of this required data is typically stored within the instruction code.

5. Provide the necessary data to an ALU or register.

6. If the instruction requires an ALU or specialized hardware to complete, instruct the hardware to perform the requested operation.

7. Write the result from the ALU back to a memory location or to a register or perhaps an output device.

8. Jump back to step (1).

Since the program counter is (conceptually) just another set of memory cells, it can be changed by calculations done in the ALU. Adding 100 to the program counter would cause the next instruction to be read from a place 100 locations further down the program. Instructions that modify the program counter are often known as "jumps" and allow for loops (instructions that are repeated by the computer) and often conditional instruction execution (both examples of control flow).

It is noticeable that the sequence of operations that the control unit goes through to process an instruction is in itself like a short computer program - and indeed, in some more complex CPU designs, there is another yet smaller computer called a microsequencer that runs a microcode program that causes all of these events to happen.
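The control unit's cycle of fetching, decoding, and executing can be sketched as a small Python loop. This is a simplified model of an imaginary accumulator machine, not a description of any real CPU: the opcode numbering, the two-cell instruction format, and the names `run` and `sum_program` are all assumptions made for the example. Instructions and data share one memory list, and a conditional jump works simply by overwriting the program counter.

```python
# Hypothetical opcodes for an imaginary one-register (accumulator) machine.
HALT, LOAD, ADD, STORE, SUB, JUMP_IF_POS = range(6)


def sum_program(n: int) -> list[int]:
    """Build a memory image that sums n + (n - 1) + ... + 1 into address 16."""
    return [
        LOAD, 16,         # address 0:  acc = running sum
        ADD, 17,          # address 2:  acc += counter
        STORE, 16,        # address 4:  running sum = acc
        LOAD, 17,         # address 6:  acc = counter
        SUB, 18,          # address 8:  acc -= 1
        STORE, 17,        # address 10: counter = acc
        JUMP_IF_POS, 0,   # address 12: loop again while counter > 0
        HALT, 0,          # address 14: stop
        0,                # address 16: running sum (data)
        n,                # address 17: counter (data)
        1,                # address 18: the constant 1 (data)
    ]


def run(memory: list[int]) -> list[int]:
    """Run the program in memory until HALT and return the final memory."""
    pc = 0   # program counter: address of the next instruction
    acc = 0  # accumulator register
    while True:
        opcode, operand = memory[pc], memory[pc + 1]  # fetch
        pc += 2                                       # point at the next instruction
        if opcode == HALT:                            # decode and execute
            return memory
        if opcode == LOAD:
            acc = memory[operand]
        elif opcode == ADD:
            acc += memory[operand]
        elif opcode == SUB:
            acc -= memory[operand]
        elif opcode == STORE:
            memory[operand] = acc
        elif opcode == JUMP_IF_POS:
            if acc > 0:
                pc = operand  # a jump is just a write to the program counter
        else:
            raise ValueError(f"unknown opcode {opcode} at address {pc - 2}")


if __name__ == "__main__":
    print(run(sum_program(1000))[16])  # 500500
```

The model leaves out everything a real control unit does concurrently or out of order; it only shows the basic loop and how changing the program counter produces a repeated section of the program.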

Biodata: My name is I Komang Adi Suryawan, and I am usually called Adi. I live in Denpasar, where I was born on 12 July 1996. My address is Jalan Sedap Malam no. 21, Gang Seruni no. 21. My hobby is swimming. I attend SMPN 1 Denpasar, in class 7D, roll number 02. My ambition is to become a civil servant. I am Hindu. I hope this biodata is useful to you. Thank you for visiting this blog.

Logo Spenza

About Me

- Lord Adi

- Kesiman, Bali, Denpasar Timur, Indonesia

- I attend SMPN 1 Denpasar. I was born in Denpasar on 12 July 1996. I am still single. I am fairly smart in class. I like the Abdel and Temon films. I like playing WarCraft; it is part of my daily routine. My hero is Lord of Avernus.